This is a two-part guide for programming the AR Drone 2.0 using JavaScript and Node.js. Read more about building a custom app to configure and control your drone in Part 1.

In Part 2, we’ll cover the drone’s OS, camera, Azure cognitive services, and how to Telnet to the drone from a web browser, among other topics.

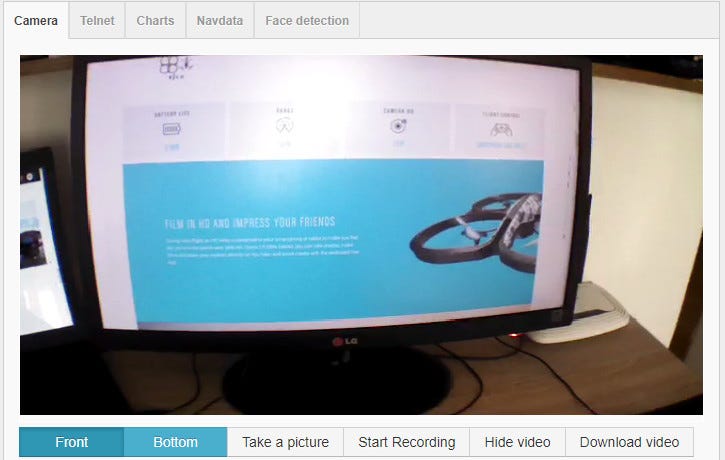

Camera

When it comes to video/image rendering, we have a few options (we mentioned one of them in the first part of this blog). I chose the node-dronestream library because it is stable and good at recovering from lost connections.

Client-side:

    new NodecopterStream(document.getElementById("placeholder"), {
      hostname: "localhost",
      port: 3008
    });

Server-side:

var config = require("./../config").droneServices.camera;

function startCameraStream() {

require("dronestream").listen(config.port);

}

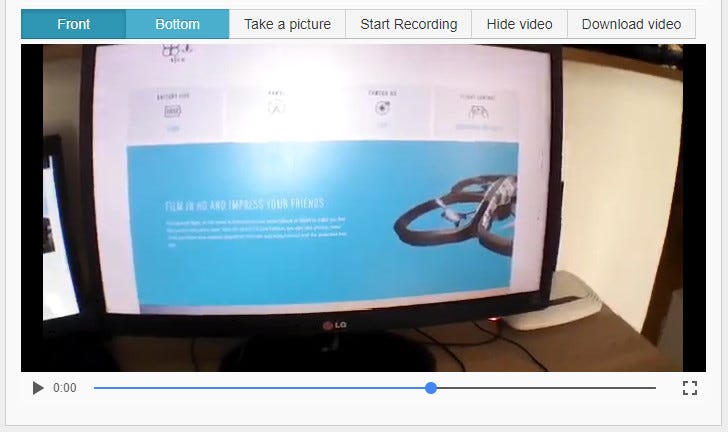

module.exports = { startCameraStream };I added the option to record/play and save the video as well:

I implemented video recording using the MediaStream Recording API.

The captureStream() method makes it possible to capture a media stream from <canvas>, <audio>, or <video> elements, and the API’s MediaRecorder can then record that stream. In our case, the node-dronestream library uses a canvas to display the camera stream in a web browser.

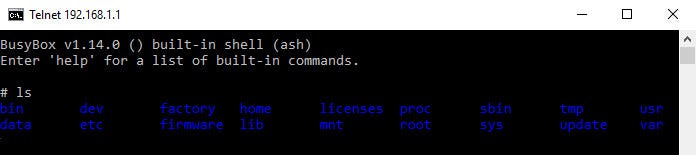

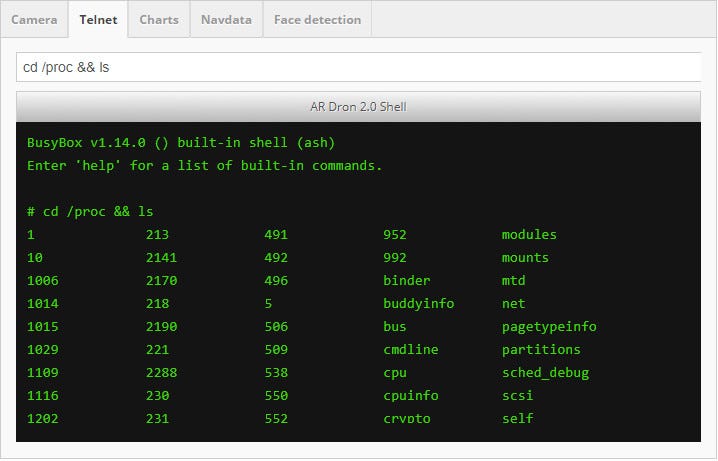
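As a rough illustration of the recording approach described above, the sketch below captures the stream from a canvas and records it with MediaRecorder. The helper name `createCanvasRecorder`, the 25 fps rate, and the `video/webm` MIME type are assumptions for illustration, not the app's actual code. The second parameter exists only so the recorder constructor can be swapped out when running outside a browser.

```javascript
// Minimal sketch: record whatever is drawn on a <canvas> (hypothetical helper).
function createCanvasRecorder(canvas, RecorderCtor = globalThis.MediaRecorder) {
  const chunks = [];
  // captureStream(fps) returns a MediaStream of the canvas contents.
  const stream = canvas.captureStream(25); // 25 fps is an assumed value
  const recorder = new RecorderCtor(stream, { mimeType: "video/webm" });

  // Each dataavailable event carries a Blob with a piece of the recording.
  recorder.ondataavailable = (event) => {
    if (event.data && event.data.size > 0) chunks.push(event.data);
  };

  return {
    start: () => recorder.start(),
    stop: () => recorder.stop(),
    // Combine the recorded pieces into one Blob that can be played or saved.
    toBlob: () => new Blob(chunks, { type: "video/webm" }),
    chunkCount: () => chunks.length,
  };
}
```

In the browser this might be called as `createCanvasRecorder(document.querySelector("#placeholder canvas"))`, assuming the stream's canvas is rendered inside the placeholder element. The Blob returned by `toBlob()` can then be passed to `URL.createObjectURL` to play or download the recording.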

The drone OS

As previously mentioned, the AR Drone runs Linux with BusyBox (v1.14.0). BusyBox is software that provides several stripped-down Unix tools in a single executable file. It runs in a variety of POSIX environments such as Linux, Android, and FreeBSD, although many of the BusyBox tools are designed to work with interfaces provided by the Linux kernel. It was specifically created for embedded operating systems with very limited resources.

The authors dubbed it “The Swiss Army knife of Embedded Linux”, as the single executable replaces basic functions of more than 300 common commands.

What does this mean? Well, it means that the AR Drone 2.0 is basically acting as a small flying server! It has its own DHCP, FTP, and Telnet servers.

As soon as a device connects to the drone’s Wi-Fi network, it gets an IP address from the drone’s DHCP server.

If we go one step further and scan the drone with the Nmap tool:

    nmap -O 192.168.1.1

    Starting Nmap 7.60 at 2017-09-28 23:09 Central European Daylight Time
    Nmap scan report for (192.168.1.1)
    Host is up (0.012s latency).
    Not shown: 997 closed ports
    PORT     STATE SERVICE
    21/tcp   open  ftp
    23/tcp   open  telnet
    5555/tcp open  freeciv
    MAC Address: A0:14:3D:A7:9A:59 (Parrot SA)
    Device type: general purpose
    Running: Linux 2.6.X
    OS CPE: cpe:/o:linux:linux_kernel:2.6
    OS details: Linux 2.6.19 - 2.6.36
    Network Distance: 1 hop
    OS detection performed.
    Nmap done: 1 IP address (1 host up) scanned in 13.92 seconds

we’ll see that the FTP and Telnet services are open on ports 21 and 23. Now let’s try to connect to the drone and explore it.

Open a command prompt and run the following command:

    telnet 192.168.1.1

We’ll get confirmation that we are successfully connected to the drone:

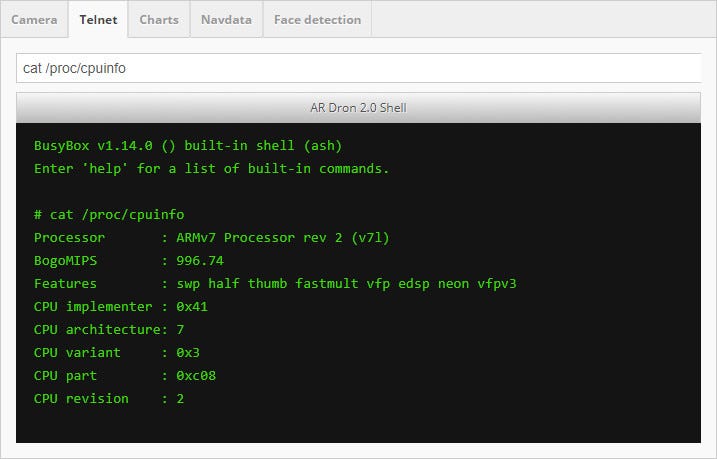

Now we can pull some interesting information, but let’s do this from the web application.

I created a web-based terminal. From it we can, for example, query the drone’s CPU information. We can execute pretty much any Linux command available on the drone and chain commands together.

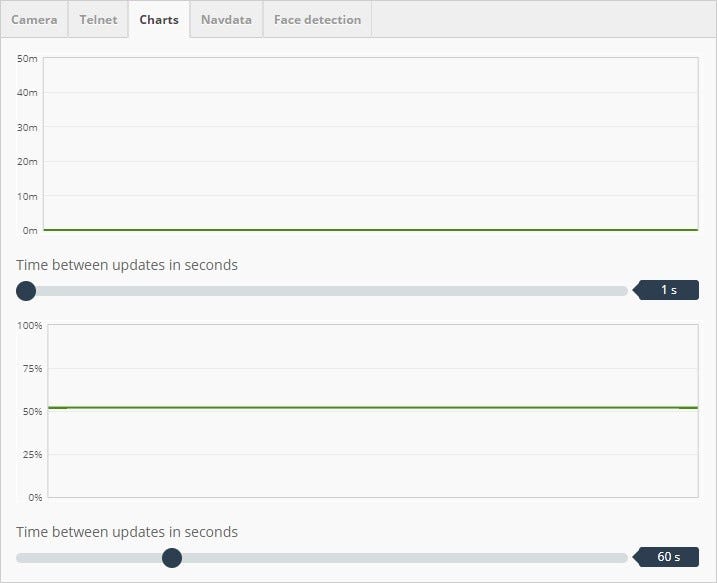
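One way a web-based terminal like this could talk to the drone is to open a TCP socket to its Telnet port from the Node.js server and relay the output to the browser. The sketch below is an assumption about how that might look, not the app's actual implementation: `chainCommands` and `runOnDrone` are hypothetical helpers, and the fixed wait is a crude way to decide when the output is complete. The raw output will likely include Telnet negotiation bytes and the shell prompt, which a real terminal would need to filter.

```javascript
const net = require("net");

// Join several shell commands so each runs only if the previous one succeeded.
function chainCommands(commands) {
  return commands.map((c) => c.trim()).filter(Boolean).join(" && ");
}

// Send one command line to the drone's Telnet port and collect the reply.
// Host and port match the values used above; waitMs is an assumed value.
function runOnDrone(commandLine, { host = "192.168.1.1", port = 23, waitMs = 1000 } = {}) {
  return new Promise((resolve, reject) => {
    let output = "";
    const socket = net.connect(port, host, () => {
      socket.write(commandLine + "\n");
      // Close the socket after a short wait, assuming the reply has arrived.
      setTimeout(() => socket.destroy(), waitMs);
    });
    socket.on("data", (chunk) => { output += chunk.toString("utf8"); });
    socket.on("close", () => resolve(output));
    socket.on("error", reject);
  });
}
```

For example, `runOnDrone(chainCommands(["cat /proc/cpuinfo", "cat /proc/meminfo"])).then(console.log)` would print the CPU and memory information in one round trip, provided the machine is connected to the drone's network.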

Real-time charts (altitude & battery)

These are simple charts for displaying altitude and battery level.
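A chart like this only needs a small rolling window of recent samples. The sketch below assumes the navdata objects come from the node-ar-drone client, whose `demo` section carries `altitudeMeters` and `batteryPercentage`. The names `toChartPoint` and `createSeries` and the window size of 60 are hypothetical, chosen for illustration.

```javascript
// Pick the two charted values out of one navdata message.
// Returns null when the message has no demo section.
function toChartPoint(navdata, now = Date.now()) {
  if (!navdata || !navdata.demo) return null;
  return {
    time: now,
    altitude: navdata.demo.altitudeMeters,
    battery: navdata.demo.batteryPercentage,
  };
}

// Keep only the most recent maxPoints samples so the chart stays readable.
function createSeries(maxPoints = 60) {
  const points = [];
  return {
    push(point) {
      if (!point) return; // ignore messages that produced no chart point
      points.push(point);
      if (points.length > maxPoints) points.shift();
    },
    values: () => points.slice(),
  };
}
```

Each incoming navdata message would be passed through `toChartPoint` and pushed into the series, and the charting library of your choice would redraw from `values()`.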

Raw navdata

I already mentioned that the drone emits a lot of navigation data on UDP port 5554. I added the most useful parameters to the UI cockpit panel, but here we get the raw navdata, which we can use to monitor drone behavior.
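For reference, subscribing to the raw stream with the node-ar-drone client might look like the sketch below. The client emits `navdata` events; `forwardNavdata` and the `send` callback (for example, a WebSocket broadcast) are hypothetical, and the 200 ms throttle is an assumed value to avoid flooding the browser.

```javascript
// Forward navdata messages to a callback, at most once per minIntervalMs.
// `client` is anything that emits "navdata" events, such as the object
// returned by require("ar-drone").createClient().
function forwardNavdata(client, send, minIntervalMs = 200, clock = Date.now) {
  let lastSent = -Infinity;
  const onNavdata = (navdata) => {
    const now = clock();
    if (now - lastSent < minIntervalMs) return; // drop messages that arrive too fast
    lastSent = now;
    send(JSON.stringify(navdata));
  };
  client.on("navdata", onNavdata);
  // Return a function that stops forwarding.
  return () => client.removeListener("navdata", onNavdata);
}
```

The function returns an unsubscribe callback, so the server can stop forwarding when the browser disconnects.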

Face detection

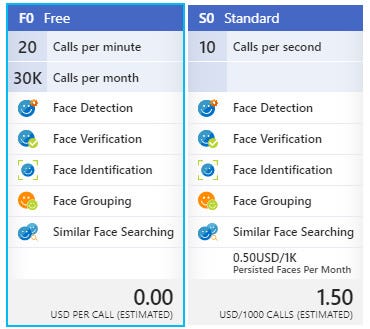

There are numerous options today when it comes to cognitive services. Both Microsoft and Amazon offer face detection as SaaS (Software as a Service). I used the Microsoft Azure platform and its Face API for face detection.

Microsoft offers a 30-day free trial for any service, but if that is not enough time for you, you can sign up for the pay-as-you-go option to get a free plan (you will need to register with your credit card at this point). Their free plan is, in my opinion, quite enough for small apps and POC projects:

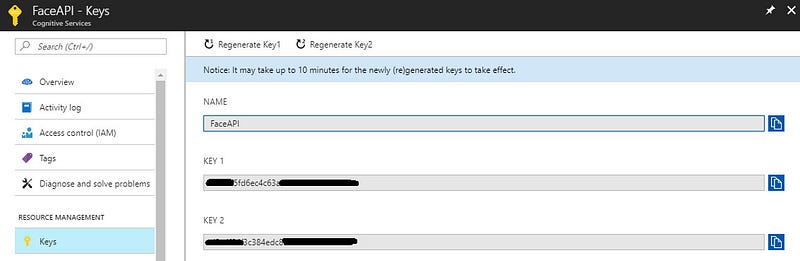

Following this, we just need to grab our API key and we are ready to go!

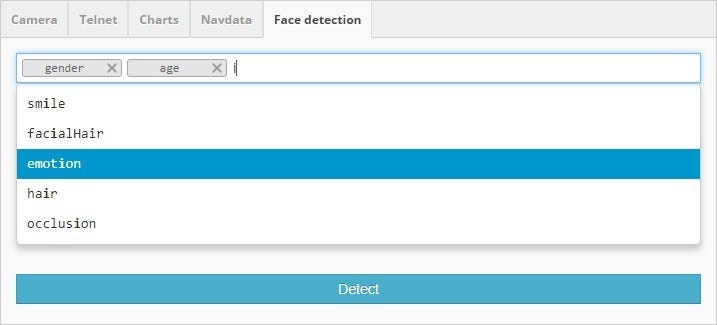

I created a simple interface for API configuration. There are various face attributes we can request, such as age, gender, head pose, smile, facial hair, glasses, emotion, hair, makeup, occlusion, accessories, blur, exposure, and noise:

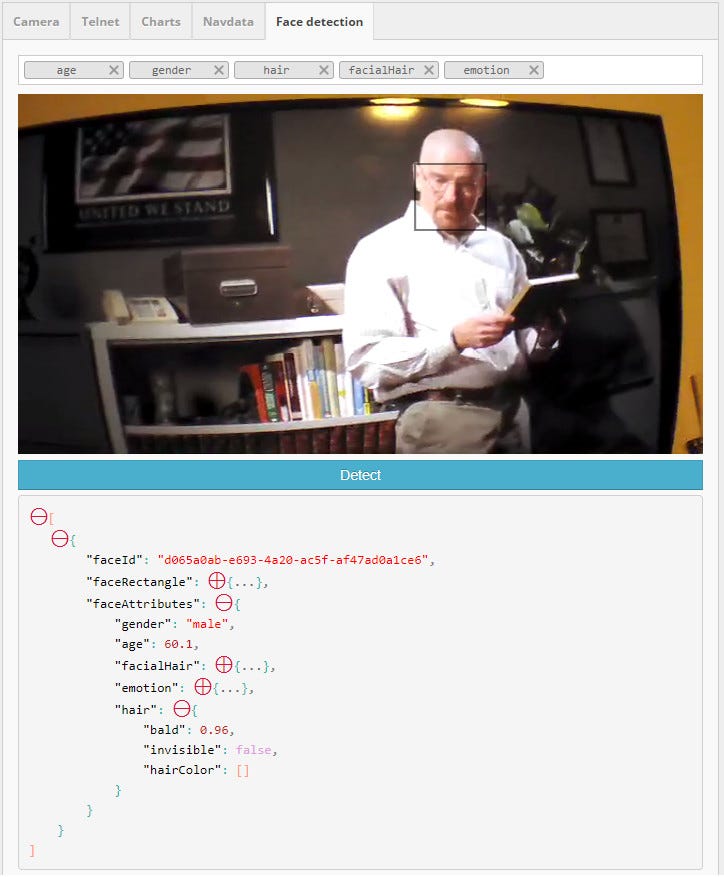

Here’s a preview of the result for an image taken with the drone camera:

We will also get a few additional parameters (faceId and faceRectangle). The faceId is used for comparing different persons, and faceRectangle can be used to mark the face on the input image.

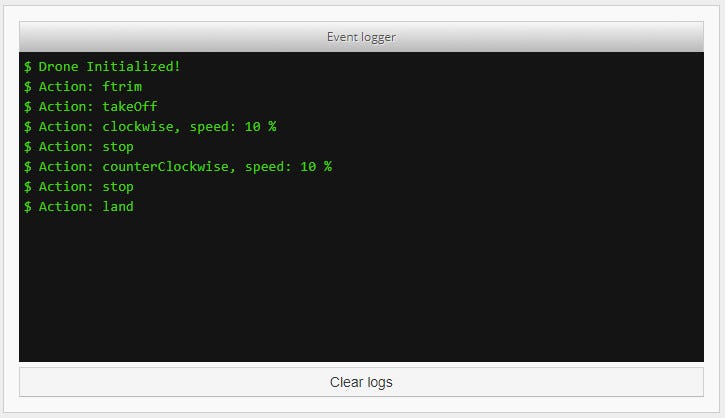
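To make the request concrete, here is a sketch of how a detect call could be assembled. It assumes the Face API v1.0 REST endpoint and its `Ocp-Apim-Subscription-Key` header; the region, the key, and the helper names `buildDetectRequest` and `toStrokeRectArgs` are placeholders for illustration. The set of attributes Microsoft accepts has changed over time, so check the current documentation before relying on any of them.

```javascript
// Build the URL and fetch options for a Face API "detect" call.
// region and apiKey are placeholders; imageBytes is the image as a Buffer.
function buildDetectRequest({ region, apiKey, attributes = [], imageBytes }) {
  const params = new URLSearchParams({
    returnFaceId: "true",
    returnFaceLandmarks: "false",
  });
  if (attributes.length > 0) {
    params.set("returnFaceAttributes", attributes.join(","));
  }
  return {
    url: `https://${region}.api.cognitive.microsoft.com/face/v1.0/detect?${params}`,
    options: {
      method: "POST",
      headers: {
        "Ocp-Apim-Subscription-Key": apiKey,
        "Content-Type": "application/octet-stream",
      },
      body: imageBytes,
    },
  };
}

// Convert a faceRectangle from the response into canvas strokeRect arguments.
function toStrokeRectArgs(faceRectangle) {
  const { left, top, width, height } = faceRectangle;
  return [left, top, width, height];
}
```

The returned `url` and `options` can be passed straight to `fetch(url, options)`. Each element of the JSON response should carry the faceId and faceRectangle mentioned above, and `ctx.strokeRect(...toStrokeRectArgs(face.faceRectangle))` would outline the face on a canvas showing the input image.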

Event logger

Another handy feature is the web-based event logger console for displaying real-time logs from the APIs:

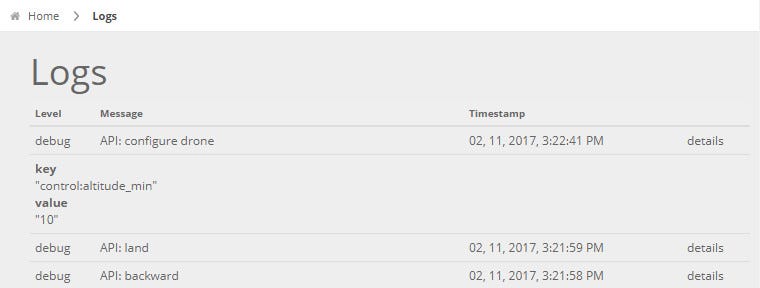

I created a Logs page containing more details. Basically, here’s what I did:

- used winston-logger to log everything to a file

- used the winston-display-log library to display the logs as HTML:

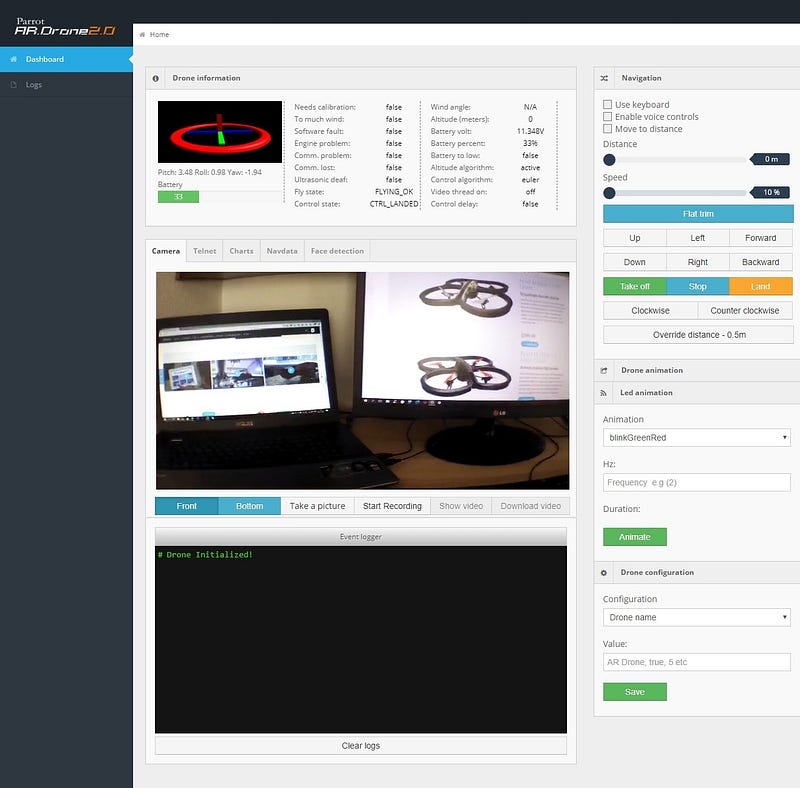

Finally, this is the screenshot of the whole app. I think it’s really cool!

Look at the picture of the AR Drone 2.0 in action 🙂

What’s next?

There are lots of possibilities, libraries, and frameworks left to explore! You just need to choose which direction to go. Here are some options to inspire your next step:

AR Drone libraries

Cylon.js – JavaScript robotic framework. Currently, the AR Drone 2.0 is supported.

EZ Builder is a powerful tool for controlling different types of robots. It supports AR Drone 2.0 and has a lot of cool features like object and color tracking.

LeapJS is a JavaScript framework for controlling objects with gestures. You can control a drone with your hands and there are a lot of examples of how to do this.

Microsoft Cognitive Services

Face recognition API – Create face recognition apps using images from drone camera.

Computer vision API – Extract rich information from images to categorize and process visual data.

Video API – intelligent video processing produces stable video output, detects motion, creates intelligent thumbnails, and detects and tracks faces.

Computer vision and image processing libraries

OpenCV (Open Source Computer Vision Library) – released under a BSD license and hence free for both academic and commercial use.
